Compute at home, home lab, whatever you wish to call it. I have always had something that allows me to tinker and learn. It has changed from time to time, but over the years my needs have become more and more focused on reliability.

To achieve this I went through an early phase of running everything on enterprise hardware, before realising the power consumption of that early-2000s-era gear was costing some pretty big dollars. I then pivoted to the cloud (unsure if I can call that a home lab), then to consumer gear, and now back to a somewhat modern and efficient enterprise server.

This post is about where I have landed, why I landed there, and why I think decommissioned enterprise hardware is the best low-cost entry path for self-hosting, leaps and bounds better than a domestic NAS or a mini PC (N150 / thin client).

Before I go any further, a disclaimer. My home lab is not a lab in the traditional sense. I am not spinning up and tearing down environments for certifications or learning new hypervisors. Yes, I still do this, but my home lab is about running real workloads for my household in a reliable manner. It doesn’t power down, and because core functions (PPPoE routing, Home Assistant, MQTT) run on it, when it is offline it causes noise in my family.

The big rocks in my house

- Frigate NVR watching 7 cameras.

- Home Assistant automating my house.

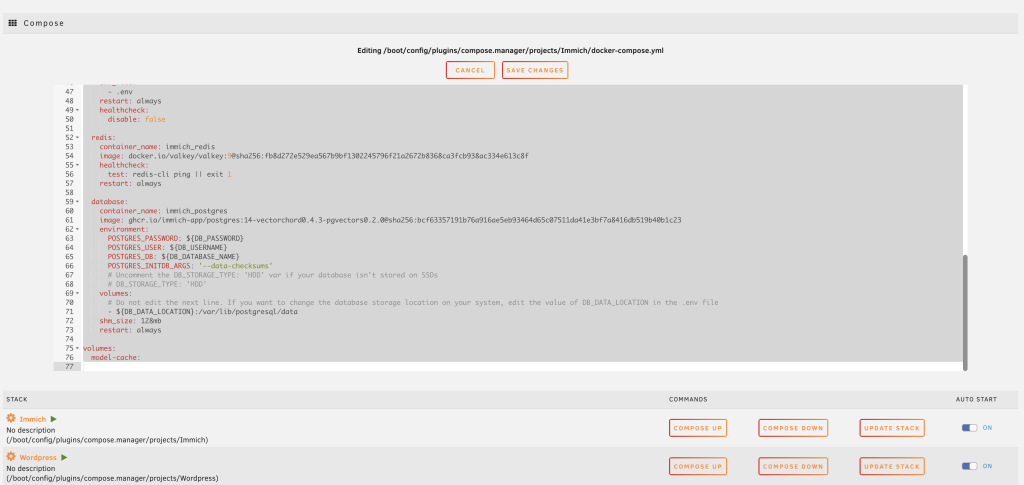

- Immich managing our family photos.

- Plex serving media.

- Mosquitto, my MQTT broker (around 400 messages per minute).

- OPNsense providing routing for my PPPoE internet connection and DHCP.

- Uptime Kuma for monitoring. I can’t stress reliability enough; I want to be aware of issues before others are.

The above is mainly for my family’s use, but the blog you are reading right now is served by WordPress (Docker Compose) on the same server. I class all of these as production workloads for my family and they need to be reliable.

That said, the setup does also serve a homelab/testing purpose. I do try new things to continue my learning journey. For example, I stood up an OpenClaw instance (the internet would make you believe you need a Mac Mini), and I try out Docker containers and VMs along with general learning. But its primary function is reliability and the ability to run services.

Why Enterprise Hardware?

The cloud is great, but simply put it is really expensive and can be limiting. I would probably be spending 10-15k AUD each year to run my setup in Azure / AWS / GCP. But even if you can look past the costs, there are some things you can’t look past.

There are 3 laws of IoT, one of them being about latency sensitivity. Over the years I have learnt a few things. Workloads all have a particular shape: some are I/O bound (Frigate, editing RAW images), some are CPU/TPU bound (Frigate) and some are just heavy (7 IP cameras). There is a reason why all ‘serious’ IP camera setups use a local NVR.

What this means is:

- Latency Matters: IP cameras are a prime example. Even though I have free access to AWS and Azure, running IP cameras in the cloud just doesn’t work without really fast internet pipes. My 50 megabit upload isn’t enough. Each camera streams close to 10 megabits per second over RTSP and I have 7 of them in my house, so that is approximately 70 megabits per second. These need to be run locally. Even assuming you have a really fast upload to the cloud, there is internet weather to take into account, plus the cost of all that I/O.

- CPU Grunt: Low power devices are nice, but it is a balance against quality of life. My rack of Raspberry Pi 5s is simply no match for the heavy workloads (Immich, Home Assistant, Frigate) that my family uses, while 2 x low power, semi-modern Xeons make short work of them.

- Cost To Buy: A modern NAS can be quite expensive. I paid $250 for a DL380 G9 (no rails) with 64GB of RAM. Simply put, the compute is basically free. Storage is a cost you pay whichever way you go, but the compute is in effect given away. I will talk about the cost to run below.

- Reliability: Having lived in the HP and Cisco ecosystem for 25+ years, I can say that broadly speaking enterprise hardware is solid gear. If you are not an enterprise hardware person, the DL380 is like the Toyota Camry: so common that parts are not an issue. ECC RAM, multiple PSUs and so on. Running OPNsense, I need reliability.
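As a rough sanity check on the latency point, the camera bandwidth arithmetic can be sketched in a few lines of shell. The figures are the ones quoted in the list above; the variable names are purely illustrative.

```shell
#!/bin/bash
# Back-of-envelope check: could the uplink carry every camera stream to the cloud?
# Figures come from the list above; variable names are illustrative only.

cameras=7            # IP cameras in the house
mbit_per_camera=10   # approximate RTSP bitrate per camera, in Mbit/s
upload_mbit=50       # available upload bandwidth, in Mbit/s

total_mbit=$(( cameras * mbit_per_camera ))
shortfall_mbit=$(( total_mbit - upload_mbit ))

echo "Aggregate camera traffic: ${total_mbit} Mbit/s"
if [ "$total_mbit" -gt "$upload_mbit" ]; then
    echo "Uplink is short by ${shortfall_mbit} Mbit/s, before any other traffic"
else
    echo "Uplink has $(( upload_mbit - total_mbit )) Mbit/s to spare"
fi
```

With these numbers the streams need 70 Mbit/s against a 50 Mbit/s uplink, so the link is oversubscribed before anything else in the house sends a byte.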

My thought process is really pretty simple, and honed by looking after this type of equipment. Decommissioned data centre hardware is really cheap, purpose built to run 24/7 and in my opinion is a far better foundation than any consumer NAS appliance you can buy.

This is gear that comes out of data centres as organisations refresh their fleets. It was designed for continuous operation. It has features that consumer hardware simply does not offer. ECC memory protects against bit flips. Redundant power supplies keep you running during a PSU failure. Hot swap drive bays let you replace failed drives without downtime. Out of band management via iLO (Integrated Lights-Out) gives you remote console access even when the OS is unresponsive.

Having run fleets of these in the past, either for myself or for past employers, I can say they just kind of work.

The hardware is worth almost nothing. A server that would have cost tens of thousands of dollars when new can be picked up on eBay for a few hundred dollars and that is what I did.

| | Consumer NAS (Synology/QNAP) | Enterprise Server (e.g. DL380 G9) |
|---|---|---|
| CPU | Celeron / Atom / Ryzen Embedded | Dual Xeon (16+ cores, 32+ threads) |
| RAM | 4-32GB (non-ECC typically) | 64-768GB ECC DDR4 |
| Drive Bays | 4-8 (typically 3.5″) | 8-25 depending on chassis |
| Remote Management | Basic web GUI | Full out of band (iLO / iDRAC) |
| Redundant PSU | Usually no | Yes |
| Hot Swap | Some models | Yes |
| Cost (secondhand) | $400-800 AUD used | $200-400 AUD |
| Noise | Low | Moderate to loud |
| Power | 30-60W | 150-250W |

Why You Should Not Use Enterprise Hardware

I just told you why I like enterprise hardware (and I really do, and will continue to buy it).

My approach to enterprise hardware is right for me, but it may not be for you. To be balanced, here are a few points on why, and to be honest, if any of these are an issue then a consumer product may be the better choice.

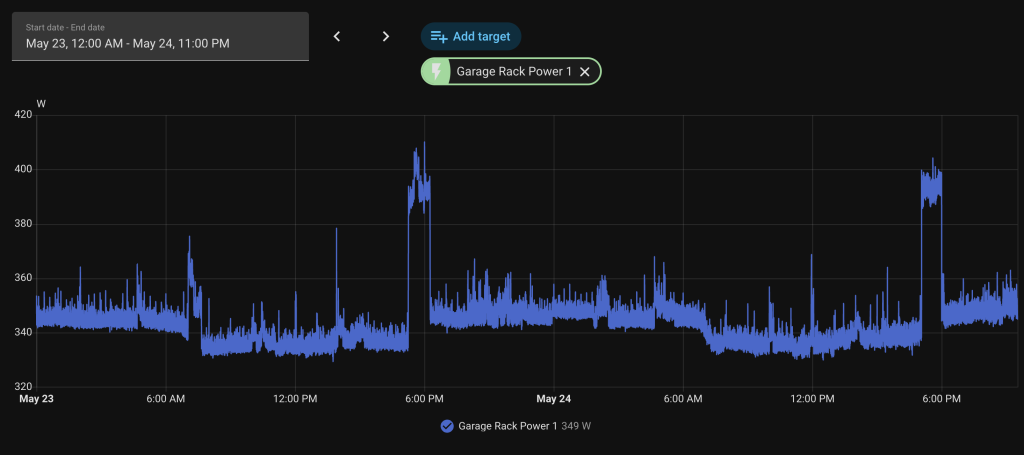

- Power Consumption: No sugar coating this, there is a cost to running any sort of gear like this. It has got a lot better, especially if you are looking at systems a few generations old (2018+), but I am consuming about 250 watts 24×7. Without a home battery I am unsure I would have made the jump; with one, I don’t see the cost.

- Noise: These systems are far from silent. I have this server in a 42RU HP rack with a sealed door (the back is open) and it is in a garage. The sound is not audible one bit in my scenario, but if this device resided inside my living areas it would be a non-starter.

- Form Factor: Designed to be rack mounted, so you need a rack.

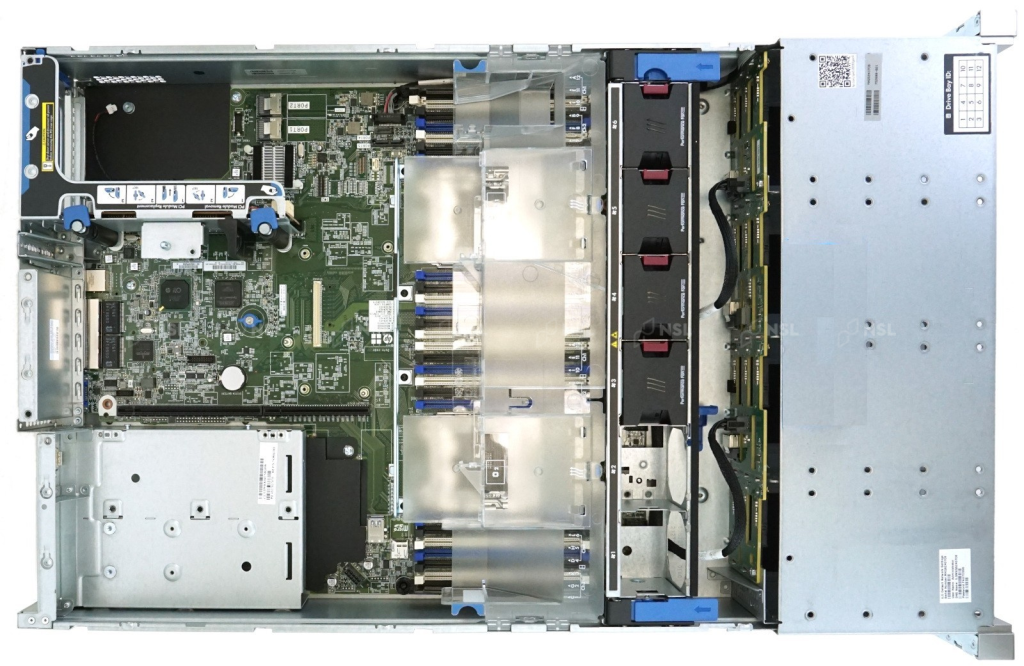

My Server – HP ProLiant DL380 G9 LFF

My server is a somewhat rare variant of the very common HP ProLiant DL380 Gen9. It is the LFF (Large Form Factor) model with 12 x 3.5 inch drive bays.

I looked for quite a while. There are hundreds of DL380 G9s available on eBay (even Facebook Marketplace), but almost all DL380 G9 units you will find are the SFF (Small Form Factor) model with 8 or 16 x 2.5 inch bays. I thought about models with 25 SFF bays but the cost of storage (and to a degree power) did not compute. 3.5 inch drives are simply cheaper at scale.

The LFF variant takes 3.5″ disks, which gives me more raw storage capacity per bay and is exactly what I wanted for large capacity spinning disks. With drive bay adapters I can, and do, also convert these bays to run my 2.5 inch Samsung 870 EVOs.

I picked up my unit on eBay for $250 AUD. It came with 64GB of DDR4 ECC RAM and dual Xeon E5 v4 processors giving me 16 physical cores and 32 threads. It did not come with a storage controller, which made it perfect, because I would not have used it. The default HP Smart Array P440ar is a hardware RAID controller, and for Unraid you want an HBA (Host Bus Adapter) that passes drives through directly to the OS. I installed a Broadcom LSI 9305-16i, which gives me 16 ports of SAS3 connectivity and supports SATA3 drives by encapsulating SATA traffic inside SAS.

Taking power consumption off the table, compare this to a Synology, UGREEN, QNAP or even an Intel N150 machine: 32 threads of Xeon and 64GB of ECC RAM for $250 cannot be matched.

Storage: Hard Drives and SSDs

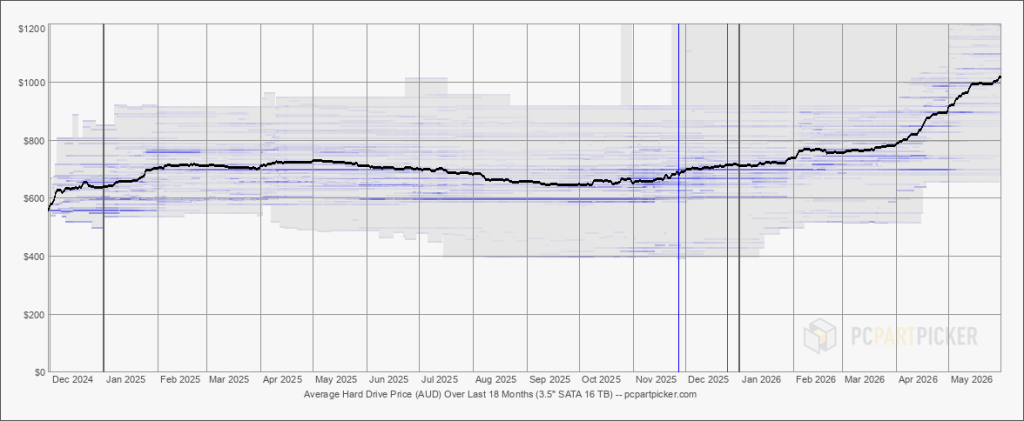

Storage was a decision I thought about for quite some time. Top of mind were performance, cost and resiliency, in a balanced manner, as after all the bulk of the costs are storage. In many ways I think I got lucky here, as the cost of storage continues to grow and grow.

The bulk of my costs are in storage. My aim was around 30TB of slow storage, with containers and VMs on fast storage. The other key require…

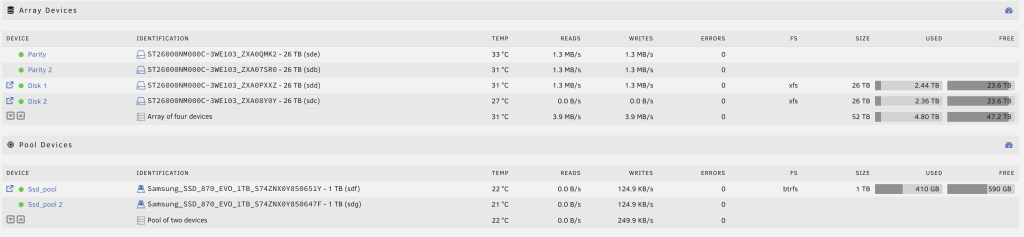

Array Devices

My main storage array is made up of 4 x 26TB Seagate Exos (ST26000NM000C) drives. Two are parity disks and two are data disks. This gives me 52TB of usable capacity (104TB raw) with dual parity protection. These are enterprise drives designed for 24/7 operation in data centre environments with a 2.5 million hour MTBF rating.

I purchased these from ServerPartDeals in the United States and had them shipped to Australia. These are recertified drives — they have been pulled from service, tested, and given a new warranty by the reseller.

The cost story is significant. Each 26TB drive cost me approximately $500 AUD landed in Australia. The same capacity drive purchased locally from an Australian retailer would cost approximately $1,200 AUD. That is more than double the price. Across four drives I saved roughly $2,800 AUD.
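The saving is easy to verify with a little shell arithmetic. The prices are the approximate figures quoted above. The "one failed drive" scenario is a hypothetical added for illustration, not something that has happened.

```shell
#!/bin/bash
# Rough cost comparison for the four 26TB drives, using the approximate
# prices quoted above. Variable names are illustrative only.

drives=4
recert_price_aud=500     # approximate landed price per recertified drive
retail_price_aud=1200    # approximate local retail price per new drive

recert_total=$(( drives * recert_price_aud ))
retail_total=$(( drives * retail_price_aud ))
saving=$(( retail_total - recert_total ))

# Hypothetical worst case (an assumption for illustration): one drive dies
# and is simply replaced at full local retail price with no warranty claim.
saving_after_one_failure=$(( saving - retail_price_aud ))

echo "Recertified total: \$${recert_total} AUD"
echo "Retail total:      \$${retail_total} AUD"
echo "Saving:            \$${saving} AUD"
echo "Saving if one drive is replaced at retail: \$${saving_after_one_failure} AUD"
```

Even in that pessimistic case, the recertified route would still come out roughly $1,600 AUD ahead on these numbers.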

The trade-off is warranty logistics. If a drive fails and I need to make a claim, I am shipping a drive back to the United States. That is not trivial. It adds cost, time and complexity compared to walking in to a local retailer with a receipt. To date I have had no issues with any of the drives, but the risk is something you need to be comfortable with.

| | ServerPartDeals (Recertified) | Australian Retail (New) |
|---|---|---|
| Price per 26TB drive | ~$500 AUD | ~$1,200 AUD |
| 4 drives total | ~$2,000 AUD | ~$4,800 AUD |
| Warranty process | Ship to US | Walk in to local store |
| Drive condition | Recertified, tested | Brand new |
| Risk | International warranty claim | Simple local return |

SSD Pool

For containers and virtual machines I run 2 x Samsung 870 EVO 1TB SSDs in a BTRFS pool. This is where Docker appdata, the Home Assistant VM disk and all the I/O intensive workloads live. The split is simple: fast SSDs for the I/O heavy work, slower spinning disks for bulk media storage. The SSD pool sits at 116GB used with 883GB free, which gives me plenty of headroom.

The SSDs sit in 2.5 inch to 3.5 inch adapter trays (HP 661914-001 style) mounted in the LFF bays alongside the Seagate spinners.

Issues

I could tell you this has been all roses, but I have run into what I would like to think are just some teething issues. As I type this I have had about 3 months of solid reliability.

- VM / Container Networking

Given a reboot of Unraid is a 20 minute saga (if you have not owned an enterprise server, they often take 10 minutes just to POST), I seldom reboot. Rebooting breaks a lot of things. The two services that cause the most noise are OPNsense, which provides internet, and Mosquitto, my MQTT broker. If either goes offline there are generally unhappy people. After about one month of uptime, Home Assistant was restarting constantly. If you are familiar with Home Assistant you will know it runs a container engine inside the VM. What was happening was that the Docker container inside the VM would constantly reboot. Under load (basically anything) it would reboot. I could not restore a backup, as that would cause a reboot. I broke my Home Assistant VM trying to debug it. I created a new VM and had the same issue. A heap of time was spent debugging.

In the end a reboot and an Unraid update fixed the problem. Kind of disappointing, but I now have monitoring in Uptime Kuma to detect this behaviour.

- SCSI Queue Depth

This is worth documenting because it took a while to figure out, and if you are running consumer SATA SSDs behind a SAS HBA you may hit the same issue. Perhaps it is a combination of Unraid, my SAS controller and my drives.

Shortly after building the system I started seeing intermittent SCSI task aborts and hardware resets on both Samsung 860 EVOs. The symptoms were ugly: `sd 1:0:4:0: [sdf] tag#XX FAILED` messages in dmesg, BTRFS device stats showing I/O errors, and occasional complete drive timeouts under sustained load. The 26TB Seagate HDDs on the same controller and same backplane had no issues whatsoever.

I went through the usual diagnostic path. Reseated cables. Moved drives to different backplane slots. Checked SMART data (clean). Replaced cables with really expensive ones. I even bought new disks because of the SMART counters on the 860 EVOs. None of it helped. The errors followed the SSDs, not the slots or cables.

What I realised was that I could replicate this. It happened under heavy I/O load. Short bursts were fine, but when I used a harness to push 50GB of sequential writes I would get errors. The 26TB HDDs never had this problem because they are mechanically slower: they max out at around 200MB/s and naturally drain the command queue. The SSDs were completing commands in 0.1ms and flooding the SAS-to-SATA translation layer.

The fix in the end was really simple, but it took weeks, dollars and time to get there. I reduced the SCSI command queue depth from the default of 32 to 1.

The root cause is a timing mismatch in the SAS-to-SATA protocol translation. The LSI 9305-16i is a SAS3 HBA. My Samsung 870 EVOs are SATA drives. When a SATA drive sits behind a SAS HBA, the communication goes through STP (SATA Tunneling Protocol). Enterprise SATA drives like the Seagate Exos are designed and tested for this environment. Consumer SSDs like the Samsung 870 EVO are optimised for direct AHCI connections and can get overwhelmed when the HBA aggressively queues 32 concurrent commands through the STP translation layer.

Queue depth 1 means the HBA sends one command at a time, waits for a response, then sends the next. Peak sequential throughput dropped from around 550MB/s to around 250MB/s. For my workloads — Docker containers starting, database queries, Frigate writing clips, Home Assistant doing thousands of small I/O operations — the difference is imperceptible. These workloads are latency sensitive, not throughput sensitive, and SSDs at queue depth 1 still deliver 0.1ms latency versus 10ms for a spinning disk.

A $0 fix. No new hardware required. I made it permanent in Unraid’s `/boot/config/go` file so it persists across reboots.

echo 1 > /sys/block/sdf/device/queue_depth

echo 1 > /sys/block/sdg/device/queue_depth
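One caveat with hard-coding `sdf` and `sdg` is that Linux does not guarantee the same drive letters on every boot. A minimal sketch of an alternative is to match on the drive's model string in sysfs instead. The function name and the pattern `Samsung SSD 870` are assumptions for illustration, so check what your drives actually report with `cat /sys/block/sdX/device/model` before relying on it.

```shell
#!/bin/bash
# Sketch: set queue_depth for every sd* disk whose model string contains a
# pattern, rather than hard-coding drive letters that may change across boots.
# The function name and the example pattern are hypothetical.

set_queue_depth_by_model() {
    pattern=$1                 # substring of the model, e.g. "Samsung SSD 870"
    depth=$2                   # desired queue depth, e.g. 1
    sysfs=${3:-/sys/block}     # overridable so the function can be dry-run

    for dev in "$sysfs"/sd*; do
        model_file="$dev/device/model"
        depth_file="$dev/device/queue_depth"
        # Skip anything without a readable model or a writable queue_depth
        [ -r "$model_file" ] && [ -w "$depth_file" ] || continue
        case "$(cat "$model_file")" in
            *"$pattern"*)
                echo "$depth" > "$depth_file"
                echo "$(basename "$dev"): queue_depth set to $depth"
                ;;
        esac
    done
}

# In the go file this would be called as (left commented out here on purpose):
#   set_queue_depth_by_model "Samsung SSD 870" 1
```

The third argument exists only so the function can be pointed at a scratch directory for testing. On the real system it defaults to `/sys/block` and needs to run as root.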

Unraid

The biggest decision I needed to make when building this platform was the OS. I needed the ability to run VMs and containers and to provide SMB/NFS storage.

I evaluated Proxmox and TrueNAS, both of which I would say are the best in their respective domains, but it came back to which was the best all-rounder for my needs.

The big selling point for me is that Unraid lets me add disks of different sizes without having to rebuild arrays. It gives me Docker support out of the box. It runs VMs through KVM/QEMU. It has a community plugin ecosystem that fills in the gaps. The web GUI is good enough for day to day management, and of course I can use SSH. If we are being honest, it is hard to escape SSH for debugging and attaching to Docker containers.

Unraid uses the LSI controller in pass-through mode. There is no hardware accelerated RAID; this is all done in software.

I did mention the ability to mix and match disk sizes. It is a good feature but there are limitations. The big limitation that is not called out is that your I/O performance is limited to that of a single disk. In my scenario I have 4 x 26TB SATA disks in a dual-parity configuration (comparable in fault tolerance to RAID-Z2). My read performance is not that of 4 disks, it is actually that of just one disk, so keep this in mind if you are chasing I/O performance.

Unlike traditional RAID (which stripes data across multiple disks), the main Unraid array stores each file entirely on a single data disk. This means write speeds are restricted to the maximum speed of that individual drive.

You can work around this with approaches such as writing to SSD and then moving to slower storage in the background (Mover), but to me this adds complexity, and I have chosen to keep things simple with the dual-parity array plus a 2 drive BTRFS mirror.

If you need hundreds of megabytes of I/O using mechanical storage, then simply put this is not the right solution for you.

On the plus side, given this is not RAID and my data is stored on an individual data disk, should I need to place a disk in another system, I should be able to read it with any other Linux distribution.

Let me just say it has been ‘pretty’ good, but there have been two issues in 6 months of running that I am pretty certain would not have happened on either TrueNAS or Proxmox. Whilst I can’t be certain they wouldn’t have happened elsewhere, I assume more battle-hardened platforms would have avoided them.

Workloads

Workloads can be broken down in to 3 distinct areas

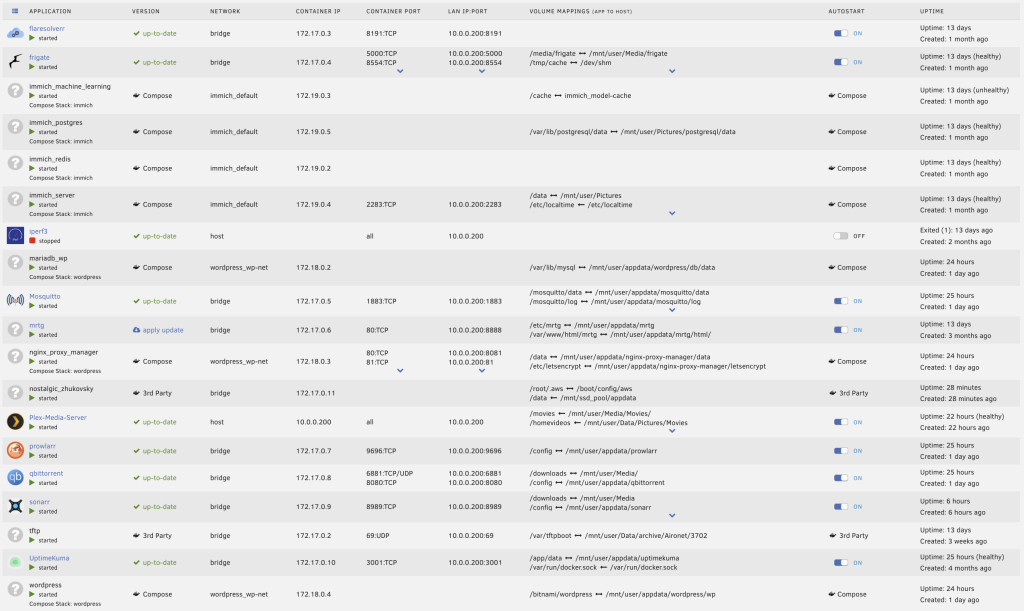

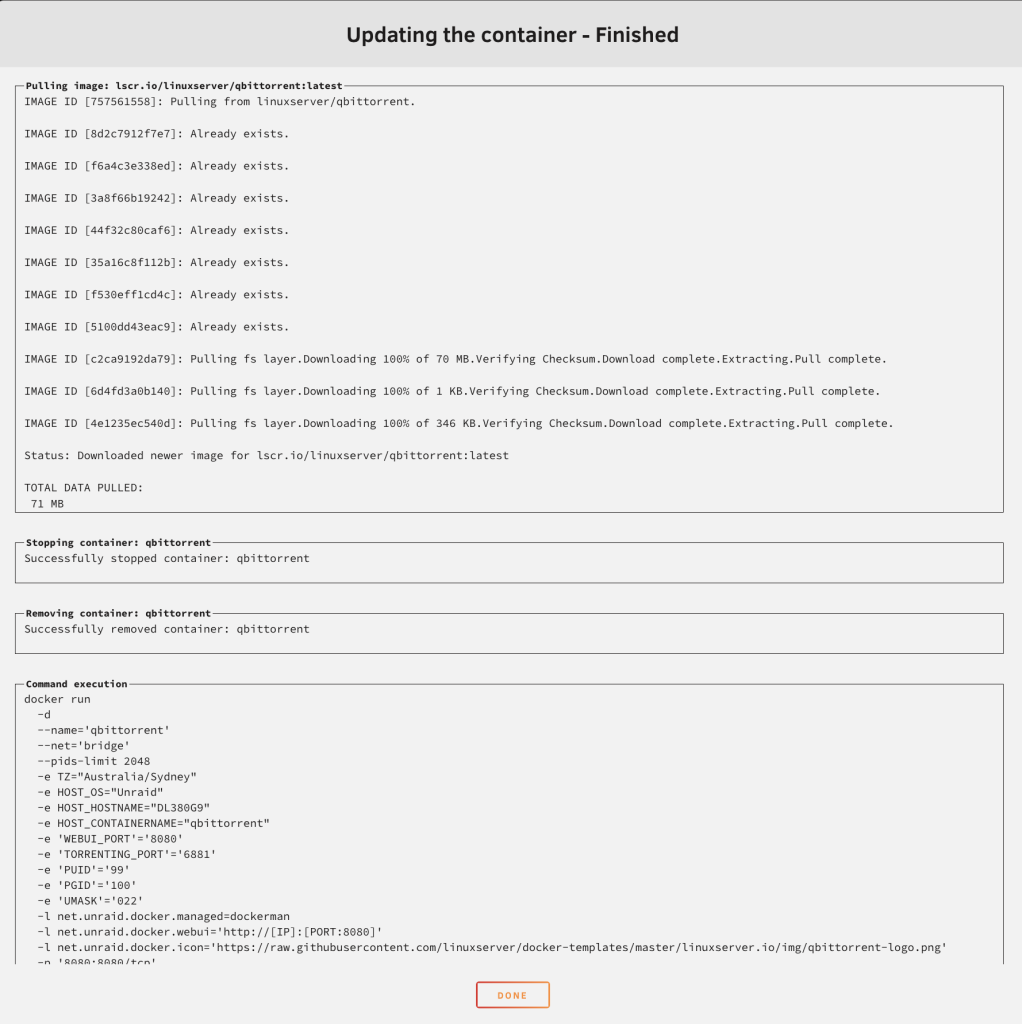

1. Docker Containers

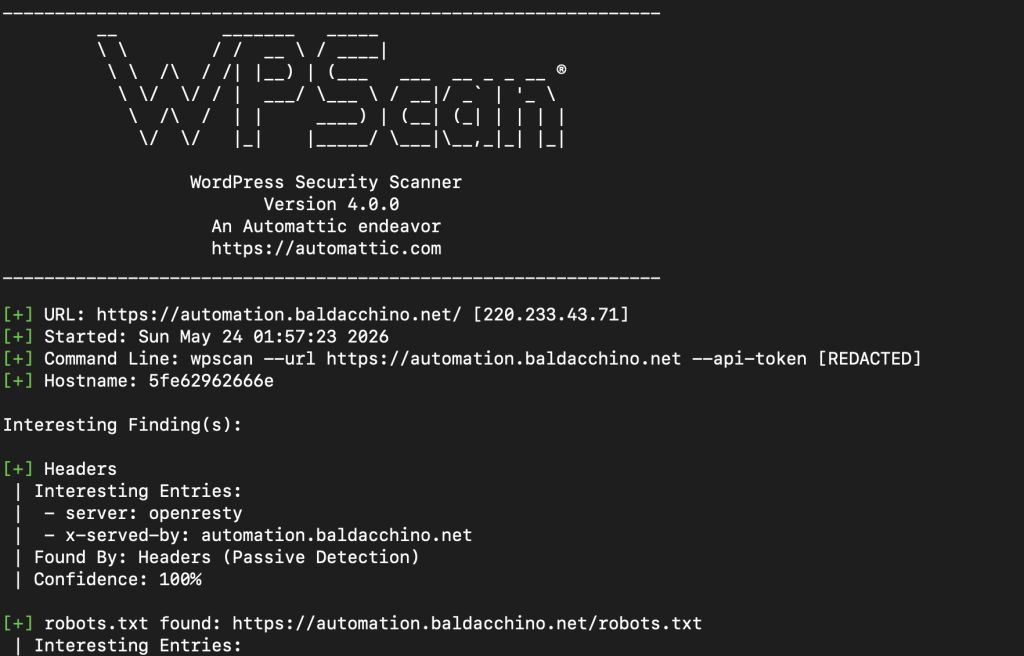

These days a lot of software is delivered via Docker. From WPScan through to TFTP and beyond, this is the way I manage most things. Recently I needed a TFTP server to configure some Cisco gear, and the solution was Docker. Every container listed above has storage mapped back to Unraid’s file system. I use my BTRFS pool of Samsung 870 EVOs as that storage, because container storage needs to be fast.

root@DL380G9:~# docker ps -a --format "table {{.Names}}\t{{.Status}}"

NAMES STATUS

mosquitto Up 12 days

prowlarr Up 12 days

sonarr Up 12 days

qbittorrent Up 12 days

Plex-Media-Server Up 12 days (healthy)

tftp Up 12 days

immich_server Up 12 days (healthy)

immich_postgres Up 12 days (healthy)

immich_machine_learning Up 12 days (unhealthy)

immich_redis Up 12 days (healthy)

wordpress Up 12 days

nginx_proxy_manager Up 12 days

mariadb_wp Up 12 days

flaresolverr Up 12 days

frigate Up 11 days (healthy)

iperf3 Exited (1) 12 days ago

mrtg Up 12 days

UptimeKuma Up 12 days (healthy)

root@DL380G9:~#
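A listing like the one above is easy to skim past, and it does contain one unhealthy container and one that has exited. As a minimal sketch, a small filter can pull out just those rows. The function name is hypothetical, and it expects tab-separated name and status columns on standard input.

```shell
#!/bin/bash
# Sketch: read "name<TAB>status" lines on stdin and print only the containers
# that report as unhealthy or have exited. The function name is illustrative.

flag_problem_containers() {
    awk -F '\t' '
        $2 ~ /\(unhealthy\)/ { print $1 ": unhealthy"; next }
        $2 ~ /^Exited/       { print $1 ": exited" }
    '
}

# On the server this would be fed by docker itself (left commented out here):
#   docker ps -a --format "{{.Names}}\t{{.Status}}" | flag_problem_containers
```

Run against the listing above, this would flag `immich_machine_learning` and `iperf3` and stay silent about everything else, which makes it a reasonable candidate for a cron job or a monitoring hook.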

- Frigate NVR — 7 cameras, object detection offloaded to a Google Coral USB TPU, recording and re-streaming via FFmpeg. Frigate on this hardware versus the Raspberry Pi 5 it was previously on is night and day. The Pi was always at the edge of its capabilities. On the DL380 G9, Frigate barely registers.

- Immich — Photo and video management for the family. Machine learning for face recognition and smart search. This was the workload that killed the Synology DS918+. On the DL380 it runs alongside everything else and the machine learning indexing that used to take hours completes in a fraction of the time.

- Plex Media Server — Media streaming. No dedicated GPU in this machine but between 32 threads of Xeon I can handle transcoding when needed. It is not perfect but it is suitable for my needs.

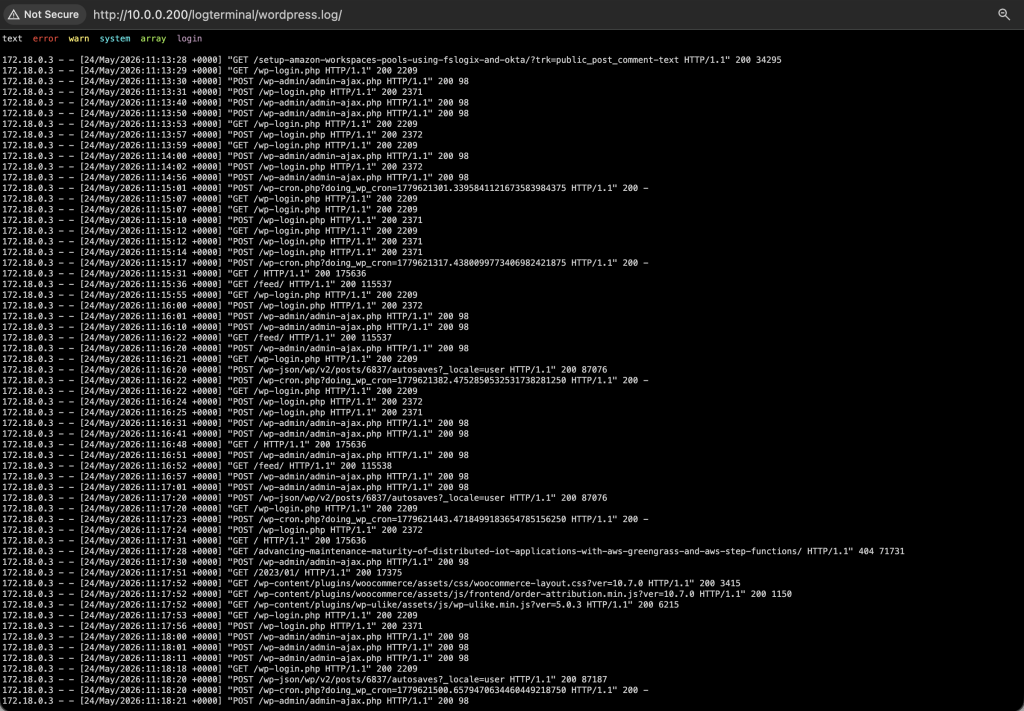

- WordPress + MariaDB + Nginx Proxy Manager — This blog. Bitnami WordPress image, MariaDB 11.8 with tuned InnoDB settings, Nginx Proxy Manager handling TLS termination with Let’s Encrypt certificates.

- Mosquitto — MQTT broker at 10.0.0.200:1883. The backbone of my home automation, bridging Home Assistant, my Arduino Giga R1, Tasmota devices and various sensors.

- MRTG — Multi Router Traffic Grapher. Old school but it works. SNMP polling my Cisco 3850 switch and autonomous mode Cisco 3702i access points, graphing traffic and utilisation over time.

- Uptime Kuma — Monitoring dashboard tracking the availability of all my services.

- Sonarr + Prowlarr + qBittorrent + FlareSolverr – The *arr stack for managing TV show libraries. Sonarr handles series tracking and automation, Prowlarr aggregates indexers, qBittorrent is the download client and FlareSolverr sits in front to handle Cloudflare challenges that would otherwise block indexer queries. Media lands in `/mnt/user/Media/TV` and is picked up by Plex automatically.

- TFTP – Trivial File Transfer Protocol. This is what I use for managing network device configuration. My house is based on Cisco gear and it is an easy way to version control my configuration.

The following containers are invoked on demand.

- AWS CLI – I use AWSCLI in a container, to perform backups.

- Speedtest – A means to run Speedtest via Docker.

- WPScan – WordPress vulnerability scanner. Whilst I keep this website updated, plugins are not updated by a Docker Compose update and are the biggest issue in keeping WordPress safe and secure.

2. Virtual Machines

- Home Assistant OS — Running as a full VM with 8 vCPUs and 8GB RAM. The VM has a 32GB virtual disk on the SSD pool and access to two virtual network interfaces. Home Assistant is the brain of my house — it controls roller shutters, ceiling fans, lighting, monitors my Sigenergy solar and battery system, manages automations and provides dashboards for everything.

- OPNsense – Running as a VM with 4 vCPUs and 4GB RAM. This is the single most critical workload on the entire server. OPNsense terminates my PPPoE connection to my ISP, handles DHCP for every device on my network, runs DNS resolution, manages firewall rules across VLANs and provides NAT for the entire house. I have a Ubiquiti EdgeRouter 10X in the rack that is disconnected but already configured. If I need to do maintenance I simply power this unit on and my house continues with internet, limited to 350 megabits per second (CPU bound).

Under normal operations the system sits at around 20% CPU utilisation. That is with all 7 cameras streaming, object detection running, Immich indexing, WordPress serving requests, Home Assistant processing automations and OPNsense routing every packet in and out of the house. The machine has more headroom now than it has ever had.

The Rack

The DL380 G9 is one piece of a broader ecosystem in my garage rack.

The rack: server, switching, patching, routing and power conditioning

- Cisco Catalyst 3850 — My core switch. 48 port PoE+ providing connectivity and power to access points and other devices throughout the house. Yes it is overkill for a house. No I do not care.

- HP Patch Panel — Clean cable management from the structured cabling throughout the house back to the switch.

- Ubiquiti EdgeRouter 10X [Backup] – Powered off. It used to deal with all routing, DHCP, firewall and PPPoE but was replaced by OPNsense, as it is unable to provide more than 350 megabits per second.

- TrippLite LR200 — Line conditioner providing power conditioning to the rack. Clean power matters when you are running enterprise hardware 24/7.

- Raspberry Pi 5 — Still in the rack. This handles serial connections to my PLC and Arduino Giga R1. Why a separate Pi instead of connecting the Arduino directly to the Unraid server? Because Unraid maps USB devices by their device ID and the Arduino Giga R1’s USB HID descriptor changes depending on what state the board is in (normal operation, bootloader mode, reset state). Every time the Arduino resets or enters bootloader mode the USB device effectively disappears and reappears as a different device from Unraid’s perspective. The Pi running a lightweight serial bridge over the network sidesteps this entirely.

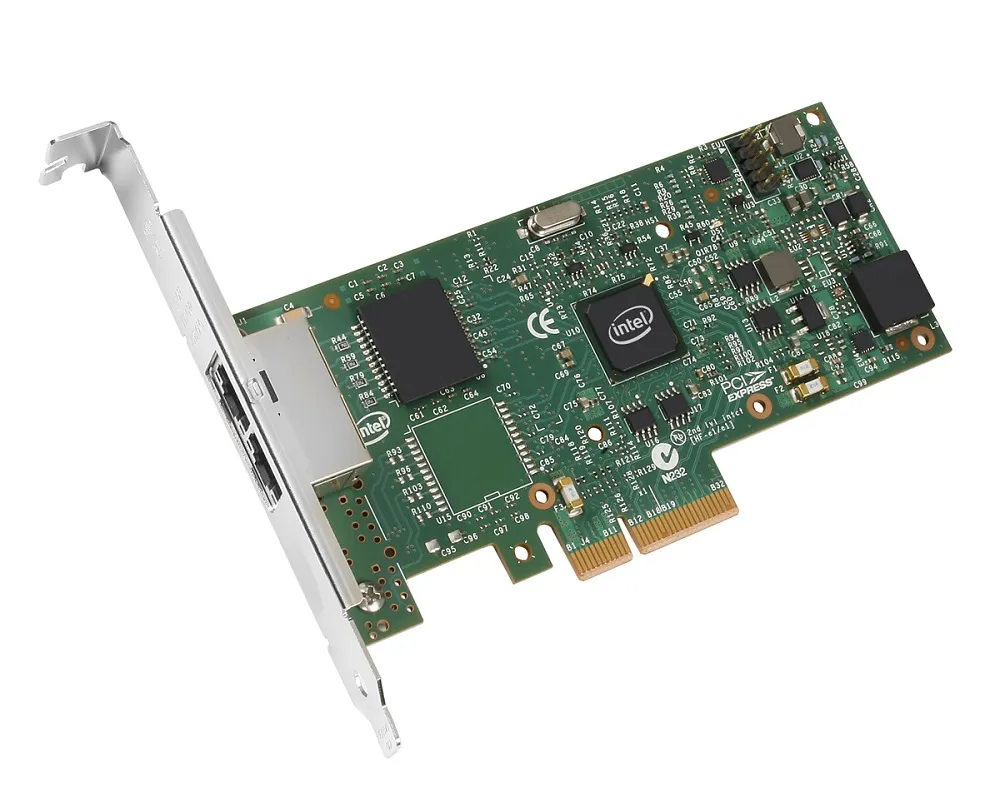

- LACP — I have LACP (Link Aggregation Control Protocol) configured between the DL380 G9’s quad port Broadcom BCM5719 NICs and the Cisco 3850. Do I need the bandwidth? No. Does a single gigabit link handle everything? Probably. But LACP gives me link redundancy and I had the ports, so why not.

- Cisco 3702i Access Points — Four of them in autonomous mode throughout the house, monitored via SNMP with MRTG.

Power Efficiency

The DL380 G9 draws approximately 200 watts at the wall under normal load. That is the server alone; the Cisco 3850 switch, the Pi and other rack equipment (PLC, Arduino) add to this total. The spikes you can see are my garden lights turning on at night, which are driven by a single 60 amp 12V DC supply, with everything coming from a single GPO (General Purpose Outlet). Whilst I could get more granular, my usage is around 200W.

For context, 200 watts continuously is roughly 1,752 kWh per year. At Australian electricity rates that is a meaningful number. But consider what I am getting for that 200 watts — a 7 camera NVR with object detection, a photo management system with machine learning, a media server, a home automation platform, a web server, a NAS, monitoring, and MQTT brokering. On consumer hardware you would need multiple devices to cover these workloads and the aggregate power draw would likely be similar if not higher.
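For anyone wanting to plug in their own numbers, the annual energy figure above works out as follows. The tariff of 30 cents per kWh is purely an assumption for illustration, not a rate taken from this post, so substitute your own.

```shell
#!/bin/bash
# Annual energy use for a constant load, plus a rough running cost.
# The tariff is a hypothetical placeholder; variable names are illustrative.

watts=200                         # continuous draw at the wall
hours_per_year=$(( 24 * 365 ))    # 8760 hours
kwh_per_year=$(( watts * hours_per_year / 1000 ))

cents_per_kwh=30                  # ASSUMPTION: example tariff, replace with yours
cost_dollars=$(( kwh_per_year * cents_per_kwh / 100 ))   # integer dollars, rounded down

echo "${kwh_per_year} kWh per year"
echo "About \$${cost_dollars} per year at ${cents_per_kwh}c/kWh"
```

At the assumed tariff this comes to 1,752 kWh and roughly $525 a year if every watt came from the grid. That is the number to weigh against buying and powering several smaller devices.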

The Sigenergy battery system also helps here. During daylight hours the server is running on solar and during the evening it draws from the 40kWh battery stack. To date, whilst I have not completed a whole year with my battery, I have yet to pull from the grid.

Quality of Life

The difference in day to day experience moving from the Synology DS918+ and Raspberry Pi combination to this server has been significant.

Frigate on the Pi was usable but it was always on the edge. Detection latency was noticeable, recordings occasionally had gaps during high activity periods, and any maintenance on the NFS connection would take the NVR offline. On the DL380 G9 with the Google Coral for detection and local SSD storage for recordings, Frigate is rock solid. 7 cameras, all detecting, all recording, and it barely makes a dent in the CPU.

Immich could not even run on the DS918+. The machine learning models for face recognition and CLIP-based smart search need proper compute. On the DL380, the initial library indexing tore through our photo collection and ongoing indexing of new photos is near instant. The search functionality alone — being able to type “beach” or “birthday” and have it find the right photos — is something my family actually uses daily.

Even WordPress is noticeably faster. MariaDB queries against the local SSD pool, Nginx serving pre-compiled static HTML, all of it runs with the kind of I/O headroom that you just cannot get on consumer hardware. I am getting 75ms TTFB (Time To First Byte) on this blog, served from my garage.

Closing Thoughts

If you are considering self-hosting and you have a space where noise is not an issue — a garage, a shed, a basement, a dedicated cupboard — enterprise hardware is the move. The gear is worth almost nothing on the secondhand market. A DL380 G9 for $250 AUD gives you dual Xeon processors, 64GB of ECC RAM, hot swap bays, redundant power supplies, out of band management and a chassis designed to run for years without intervention.

It is not perfect. There is no dedicated GPU in my system which means I cannot do hardware accelerated transcoding in Plex or run local LLMs efficiently. The power draw is higher than a consumer NAS. The fan noise rules out keeping it in a living space. And as I documented above, running consumer SSDs behind a SAS HBA can introduce quirks that take some effort to diagnose.

But for what I need — reliable, consolidated, high performance self-hosting for my family’s workloads — this DL380 G9 running Unraid is the best platform I have ever run. It has more headroom than anything I have had before. It does everything I need. And it cost me less than a mid-range Synology.

Enterprise hardware. It is not glamorous, but it works.